vLLM

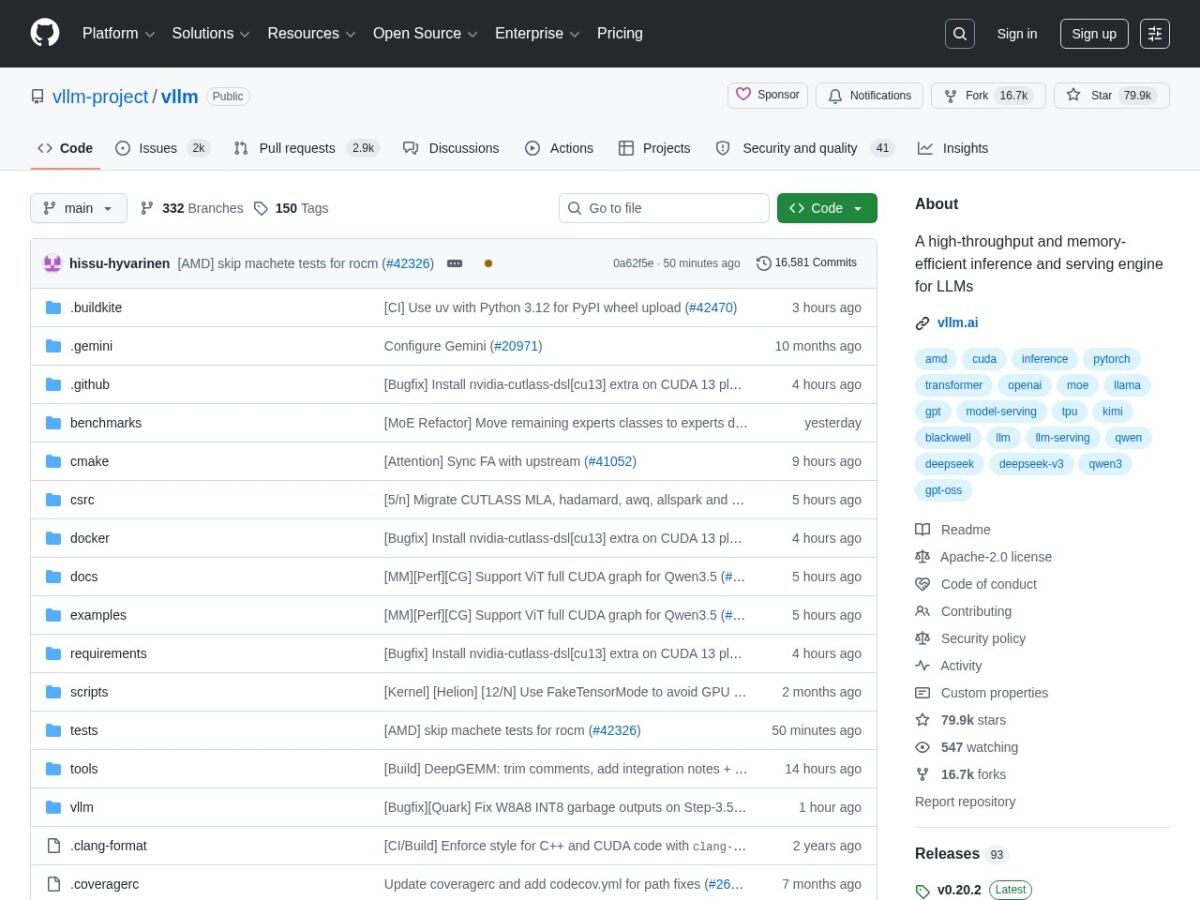

A high-throughput, memory-efficient serving engine for LLMs using PagedAttention.

Enterprise-Grade LLM Serving and Throughput

vLLM is a fast, easy-to-use library for LLM inference and serving, designed for high-performance production environments. Its core innovation is PagedAttention, an attention algorithm that stores the KV cache in fixed-size blocks, much like paged virtual memory in an operating system, so that almost no cache memory is lost to fragmentation or over-reservation. Because more requests fit in GPU memory at once, vLLM achieves much higher throughput than general-purpose libraries such as Hugging Face Transformers, which lowers the cost of running LLM services at scale.
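The block-based idea behind PagedAttention can be illustrated with a toy allocator. This is a minimal sketch and not vLLM's actual implementation: `BlockAllocator`, `Sequence`, and the `BLOCK_SIZE` value are hypothetical names and numbers chosen for illustration. It shows only the bookkeeping, where each request maps its tokens onto fixed-size blocks taken from a shared pool, so unused space is limited to the tail of the last block.

```python
from dataclasses import dataclass, field

BLOCK_SIZE = 16  # tokens per KV block (illustrative value, an assumption)


@dataclass
class BlockAllocator:
    """Hands out fixed-size physical blocks from a shared pool."""
    num_blocks: int
    free: list = field(init=False)

    def __post_init__(self):
        self.free = list(range(self.num_blocks))

    def allocate(self) -> int:
        if not self.free:
            raise MemoryError("KV cache pool exhausted")
        return self.free.pop()

    def release(self, block_ids):
        self.free.extend(block_ids)


@dataclass
class Sequence:
    """Tracks one request's logical-to-physical block mapping."""
    allocator: BlockAllocator
    num_tokens: int = 0
    block_table: list = field(default_factory=list)

    def append_token(self):
        # A new physical block is needed only when the last one is full.
        if self.num_tokens % BLOCK_SIZE == 0:
            self.block_table.append(self.allocator.allocate())
        self.num_tokens += 1

    def wasted_slots(self) -> int:
        # Unused slots exist only in the final, partially filled block.
        return len(self.block_table) * BLOCK_SIZE - self.num_tokens

    def release_all(self):
        # Return every block to the pool when the request finishes.
        self.allocator.release(self.block_table)
        self.block_table = []
        self.num_tokens = 0


if __name__ == "__main__":
    allocator = BlockAllocator(num_blocks=8)
    seq = Sequence(allocator)
    for _ in range(35):
        seq.append_token()
    print(len(seq.block_table), seq.wasted_slots())  # 3 blocks, 13 unused slots
```

In this sketch a 35-token request occupies three 16-token blocks and leaves 13 slots unused, whereas reserving a contiguous region sized for the maximum possible sequence length would hold far more memory idle.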

The project is widely adopted by AI cloud providers and enterprises because it handles many concurrent requests efficiently. It supports a wide range of model architectures and can be deployed with common tools such as Docker and Kubernetes. vLLM also uses continuous batching: new requests join the running batch as soon as earlier ones finish, rather than waiting for the whole batch to complete, which keeps the GPU busy and further increases throughput. For teams moving from a prototype to a production-scale AI service with thousands of users, vLLM is a leading choice of backend inference engine, offering a strong balance of speed, ease of use, and hardware utilization.
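The effect of continuous batching can be seen in a small simulation. This is a simplified sketch under stated assumptions, not vLLM's scheduler: each request is reduced to the number of tokens it still has to generate (assumed positive), one decoding step produces one token per active request, and the function names are hypothetical.

```python
from collections import deque


def static_batching_steps(lengths, batch_size):
    """Each fixed batch runs until its longest request finishes."""
    steps = 0
    for i in range(0, len(lengths), batch_size):
        steps += max(lengths[i:i + batch_size])
    return steps


def continuous_batching_steps(lengths, batch_size):
    """Free slots are refilled from the queue before every decoding step."""
    waiting = deque(lengths)
    running = []  # tokens remaining for each active request
    steps = 0
    while waiting or running:
        # Admit waiting requests into any free batch slots.
        while waiting and len(running) < batch_size:
            running.append(waiting.popleft())
        # One decoding step: every active request emits one token,
        # and requests that reach zero remaining tokens leave the batch.
        running = [r - 1 for r in running if r - 1 > 0]
        steps += 1
    return steps


if __name__ == "__main__":
    lengths = [2, 8, 3, 8]  # output lengths in tokens (made-up example data)
    print(static_batching_steps(lengths, batch_size=2))      # 16
    print(continuous_batching_steps(lengths, batch_size=2))  # 13
```

With these made-up lengths, static batching needs 16 decoding steps because short requests leave their slot idle until the longest request in the batch completes, while the continuous variant finishes in 13 by filling freed slots immediately.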
