Replicate

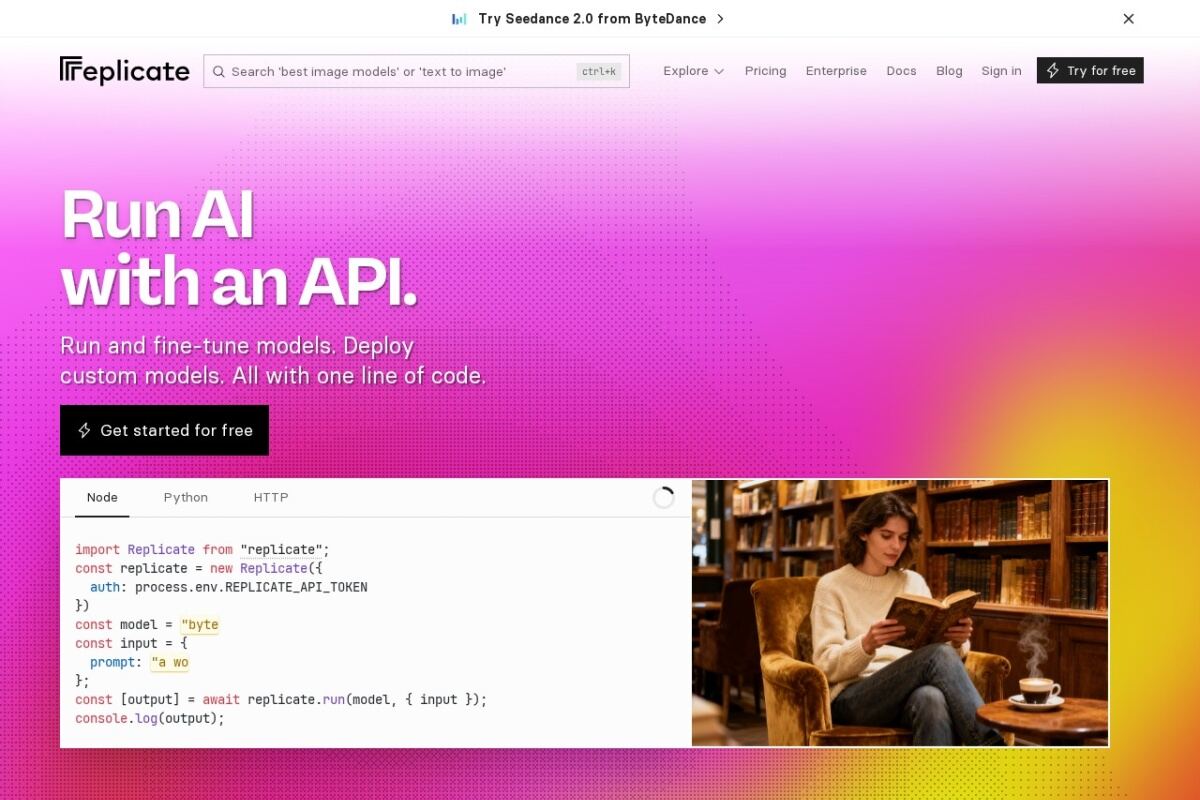

Run and deploy open-source machine learning models with a simple cloud API.

Seamless Model Deployment in the Cloud

Replicate removes the friction of setting up GPUs and managing complex Python environments. Developers can run open-source models such as SDXL, Llama, and Whisper with a single line of code or a call to the REST API. The platform is built on the philosophy that you shouldn't need to be a DevOps expert to use AI. Each model is packaged into a standardized container that scales up or down with your traffic.
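As a rough illustration of what a REST call might look like, here is a minimal sketch using only the Python standard library. It assumes the predictions endpoint at `https://api.replicate.com/v1/predictions` with a bearer token; the helper names `build_prediction_request` and `create_prediction` are hypothetical, and the version ID and input fields depend on the model you choose, so check the model's page for the real values.

```python
import json
import urllib.request

# Assumed endpoint for creating a prediction; confirm against the current API docs.
API_URL = "https://api.replicate.com/v1/predictions"


def build_prediction_request(token, version, inputs):
    """Build (but do not send) a POST request that asks for one prediction.

    token   -- your API token (placeholder; never hard-code a real one)
    version -- the model version ID copied from the model's page
    inputs  -- dict of model inputs, e.g. {"prompt": "..."}
    """
    body = json.dumps({"version": version, "input": inputs}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def create_prediction(token, version, inputs):
    """Send the request and return the decoded JSON response."""
    request = build_prediction_request(token, version, inputs)
    with urllib.request.urlopen(request) as response:
        return json.load(response)
```

Calling `create_prediction(token, version_id, {"prompt": "an astronaut riding a horse"})` would submit the job; which input keys are valid is specific to each model.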

The platform provides a clean web interface where users can test model parameters directly in the browser before integrating a model into their applications, which is particularly useful for rapid prototyping. Replicate also supports fine-tuning, so users can train models on their own data without managing the underlying infrastructure. Billing is typically usage-based: you pay only for the compute time consumed during inference, which keeps costs manageable for startups and individual creators.
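To make the usage-based model concrete, the sketch below estimates a bill as run time multiplied by a per-second rate. The function name `estimate_cost` and the rate in the example are hypothetical; actual prices vary by hardware and model and should be taken from the current pricing page.

```python
def estimate_cost(seconds_per_run, runs, price_per_second):
    """Estimate spend under per-second billing.

    seconds_per_run  -- average compute time of one prediction, in seconds
    runs             -- number of predictions
    price_per_second -- hardware rate in dollars per second (hypothetical here)
    """
    if seconds_per_run < 0 or runs < 0 or price_per_second < 0:
        raise ValueError("all arguments must be non-negative")
    return seconds_per_run * runs * price_per_second


# Example with a made-up rate: 1,000 runs of 4 seconds each at $0.001 per second.
monthly_estimate = estimate_cost(4.0, 1000, 0.001)
print(f"Estimated cost: ${monthly_estimate:.2f}")
```

Because idle time is not part of the formula, a workload with no traffic costs nothing under this simplified model.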
